Google adds AI language understanding to Alphabet's helper robots

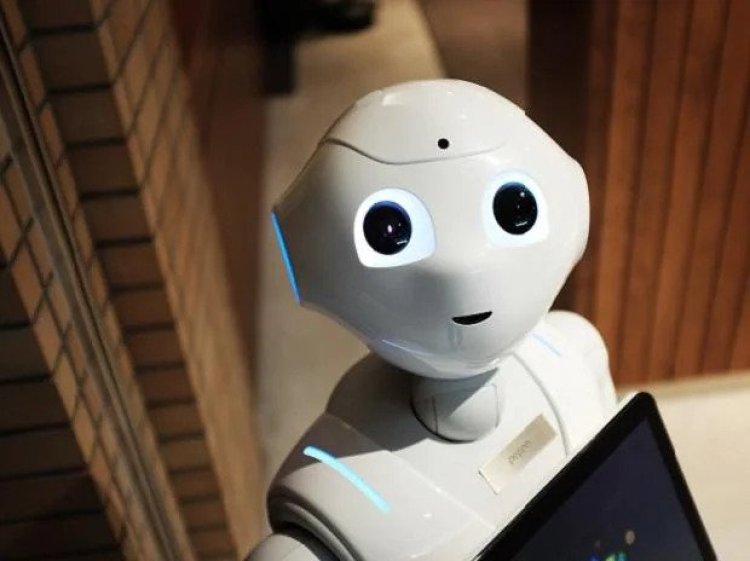

San Francisco: Google's parent company Alphabet is bringing together two of its most ambitious research projects -- robotics and AI language understanding -- to make a "helper robot" that can understand natural language commands.

According to The Verge, since 2019, Alphabet has been developing robots that can carry out simple tasks like fetching drinks and cleaning surfaces.

This Everyday Robots project is still in its infancy -- the robots are slow and hesitant -- but the bots have now been given an upgrade: improved language understanding courtesy of Google's large language model (LLM) PaLM.

Most robots only respond to short and simple instructions, like "bring me a bottle of water". But LLMs like GPT-3 and Google's MUM can better parse the intent behind more oblique commands.

In Google's example, you might tell one of the Everyday Robots prototypes, "I spilled my drink, can you help?" The robot filters this instruction through an internal list of possible actions and interprets it as "fetch me the sponge from the kitchen".

Google has dubbed the resulting system PaLM-SayCan, a name that captures how the model combines the language understanding skills of LLMs ("Say") with the "affordance grounding" of its robots ("Can"), that is, filtering instructions through the actions the robot can actually perform.
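
To make the idea concrete, the selection step can be sketched in a few lines of Python. This is only an illustration, not Google's implementation: the skill names, the scores, and the `choose_skill` helper are all hypothetical, and the sketch assumes the two signals are combined by simple multiplication.

```python
from typing import Dict


def choose_skill(
    language_scores: Dict[str, float],
    affordance_scores: Dict[str, float],
) -> str:
    """Pick the skill with the highest combined score.

    language_scores:   how relevant the language model rates each skill
                       for the instruction (the "Say" part).
    affordance_scores: how feasible each skill is for the robot right now
                       (the "Can" part). Missing skills count as infeasible.
    """
    combined = {
        skill: language_scores[skill] * affordance_scores.get(skill, 0.0)
        for skill in language_scores
    }
    return max(combined, key=combined.get)


# Made-up scores for the instruction "I spilled my drink, can you help?"
language_scores = {
    "fetch the sponge from the kitchen": 0.6,
    "vacuum the spill": 0.3,
    "bring a bottle of water": 0.1,
}
affordance_scores = {
    "fetch the sponge from the kitchen": 0.9,
    "vacuum the spill": 0.0,  # assumption: no vacuum cleaner is available
    "bring a bottle of water": 0.9,
}

best = choose_skill(language_scores, affordance_scores)
print(best)
```

With these invented numbers, the sponge action wins because it is both relevant to the request and something the robot can do, while a skill the robot cannot perform is ruled out however plausible it sounds.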

Google said that with PaLM-SayCan integrated into its robots, the bots planned correct responses to a set of 101 user instructions 84 per cent of the time and successfully executed them 74 per cent of the time.